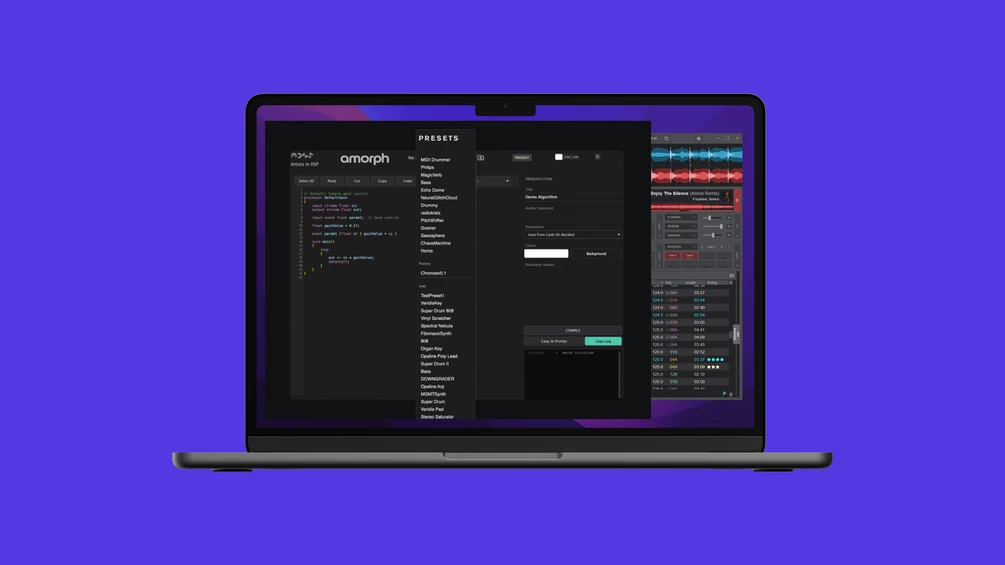

HangupsMusic.com – The landscape of digital music production is currently undergoing a seismic shift as generative artificial intelligence moves beyond simple MIDI patterns and vocal synthesis into the foundational architecture of sound itself. At the forefront of this evolution is a new release from the developer collective Artists in DSP. Their latest offering, an open-beta plugin titled Amorph, represents a significant milestone in the democratization of software development for musicians. By allowing users to transform natural language text prompts into fully functional digital signal processing (DSP) tools, Amorph is effectively stripping away the traditional barriers to entry that have long separated sound designers from software engineers.

The core philosophy behind Amorph is the concept of "freedom through accessibility." For decades, the creation of custom audio effects and virtual instruments was a niche discipline reserved for those with a deep understanding of programming languages and frameworks such as C++, JUCE, or Faust. While platforms like Max/MSP and Pure Data offered a visual bridge for the mathematically inclined, the leap from an artistic idea to a compiled, usable plugin remained daunting for the average producer. Amorph changes this dynamic by leveraging the power of Large Language Models (LLMs), such as ChatGPT, to act as an intermediary. Instead of writing lines of code, the user describes the desired behavior of a plugin in plain English (or any language the LLM understands), and the software handles the heavy lifting of translating that intent into executable DSP code.

The release is bifurcated into two distinct modules to cater to different stages of the production pipeline. The first, Amorph Instrument, is designed for the generation of sound from scratch. This module allows users to manifest everything from classic subtractive synthesizers and FM oscillators to more experimental textures like generative noise landscapes, granular engines, and evolving drone machines. By simply describing the character of the sound—for instance, "a dual-oscillator synth with a drifting pitch and a resonant low-pass filter"—the plugin generates the underlying architecture and presents the user with a customized interface.
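To make the example prompt concrete, here is a minimal Python sketch of the kind of signal chain that description implies: two detuned sawtooth oscillators whose pitch drifts slowly, fed through a resonant low-pass filter. This is not code produced by Amorph. The function name `render_synth`, every parameter, and the choice of a Chamberlin state-variable filter are assumptions made purely for illustration.

```python
import math

def render_synth(freq=110.0, seconds=0.5, sample_rate=44100,
                 detune_cents=7.0, drift_cents=5.0, drift_hz=0.3,
                 cutoff=1200.0, resonance=0.7):
    """Render a mono buffer: two drifting saws into a resonant low-pass."""
    phase_a = phase_b = 0.0
    low = band = 0.0
    # Chamberlin state-variable filter coefficients (illustrative choice).
    f = 2.0 * math.sin(math.pi * cutoff / sample_rate)
    damping = 2.0 * (1.0 - resonance)  # resonance expected in [0, 1)
    out = []
    for n in range(int(seconds * sample_rate)):
        t = n / sample_rate
        # A slow sine LFO pushes the two oscillators in opposite directions.
        drift = drift_cents * math.sin(2.0 * math.pi * drift_hz * t)
        freq_a = freq * 2.0 ** (drift / 1200.0)
        freq_b = freq * 2.0 ** ((detune_cents - drift) / 1200.0)
        phase_a = (phase_a + freq_a / sample_rate) % 1.0
        phase_b = (phase_b + freq_b / sample_rate) % 1.0
        # Naive (aliasing) sawtooth waves, mixed at equal level.
        saw = 0.5 * ((2.0 * phase_a - 1.0) + (2.0 * phase_b - 1.0))
        # One filter step; the low-pass output is what we keep.
        low += f * band
        high = saw - low - damping * band
        band += f * high
        out.append(low)
    return out

buffer = render_synth()
```

In a real plugin, `cutoff` and `resonance` are the sort of values a user would expect to adjust, and production code would typically use band-limited oscillators instead of the naive sawtooth used here.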

The second module, Amorph Effect, focuses on the manipulation of existing audio signals. This is where the creative potential for mixing and sound design becomes particularly potent. Producers can request specific processing chains, such as "a vintage-style plate reverb with a shimmering high-end tail" or "a bit-crushing distortion that reacts to the input dynamics." The system interprets these descriptors, writes the necessary algorithms, and compiles a functional effect unit in real time. This capability effectively turns a DAW into a laboratory where the only limit is the user’s ability to articulate their sonic vision.
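As a rough illustration of what "a bit-crushing distortion that reacts to the input dynamics" could mean in DSP terms, the Python sketch below tracks the input level with a simple envelope follower and lowers the bit depth as the signal gets louder. It is one possible reading of the prompt, not Amorph's output. The name `dynamic_bitcrush` and its parameters are hypothetical.

```python
def dynamic_bitcrush(samples, min_bits=4, max_bits=12, release=0.999):
    """Quantize samples in [-1, 1]; louder input gets fewer bits."""
    envelope = 0.0
    out = []
    for x in samples:
        # Peak envelope follower: instant attack, exponential release.
        envelope = max(abs(x), envelope * release)
        level = min(envelope, 1.0)
        # Map level 0..1 to a bit depth from max_bits down to min_bits.
        bits = round(max_bits - level * (max_bits - min_bits))
        steps = 2 ** (bits - 1)
        # Snap the sample to the nearest quantization step.
        out.append(round(x * steps) / steps)
    return out

crushed = dynamic_bitcrush([0.0, 0.3, 0.9, -0.9, 0.1])
```

Inverting the mapping, so that quiet passages are crushed harder than loud ones, would be an equally valid interpretation. That ambiguity is why the wording of the prompt matters.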

One of the most striking features of the Amorph ecosystem is its automated interface generation. In traditional plugin development, designing a User Interface (UI) can be as time-consuming as writing the audio engine itself. Amorph bypasses this hurdle by dynamically creating a control panel for every parameter generated by the code. When the LLM identifies a variable that should be user-controllable, such as a filter cutoff, a delay feedback amount, or an oscillator waveform, Amorph automatically maps that variable to an on-screen knob on the plugin’s dashboard. This ensures that the generated tools are not just static "black boxes" but interactive instruments that can be performed and automated within a session.
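The general idea of building a panel from declared parameters can be sketched in a few lines of Python. Amorph's internal mechanism is not documented in this article, so the `Param` class, the `build_panel` function, and the parameter ranges below are all hypothetical. They only show how a list of declared parameters might drive a set of normalized knobs.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Param:
    """A user-controllable value declared by the generated DSP code."""
    name: str
    minimum: float
    maximum: float
    default: float

    def from_knob(self, position):
        """Map a normalized knob position (0..1) onto the parameter range."""
        position = min(1.0, max(0.0, position))
        return self.minimum + position * (self.maximum - self.minimum)

def build_panel(params):
    """Create one knob entry per declared parameter, set to its default."""
    return {p.name: p.default for p in params}

# Hypothetical parameters a generated delay/filter effect might expose.
declared = [
    Param("cutoff", 20.0, 20000.0, 1000.0),
    Param("feedback", 0.0, 0.95, 0.4),
]
panel = build_panel(declared)
# Turning the cutoff knob to the halfway point updates the stored value.
panel["cutoff"] = declared[0].from_knob(0.5)
```

A linear mapping keeps the sketch short. Frequency controls in real plugins usually use a logarithmic curve, and a discrete choice such as an oscillator waveform would map to stepped positions.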

The technical requirements for Amorph reflect a commitment to broad compatibility, ensuring that the tool is accessible to a wide range of creators. The plugin is currently compatible with macOS 10.13 and later, as well as 64-bit versions of Windows 10 and 11. It integrates with the industry’s leading Digital Audio Workstations (DAWs), including Ableton Live, Logic Pro, FL Studio, and REAPER. Given its status as an open beta, the installation process involves a manual setup that is detailed in the developer’s documentation and accompanying video tutorials. While this requires a few extra steps compared to a standard installer, it provides users with a closer look at the modular nature of the software they are about to employ.

The origin story of Artists in DSP adds a layer of academic and artistic depth to the project. Founded in 2022 by two former sound arts students, the collective was born out of a desire to explore the "outer limits" of music technology. Their mission statement emphasizes a dedication to expanding the boundaries of sound arts and reimagining the creative process. By releasing Amorph as a free open beta, they are not just providing a tool; they are fostering a community-driven experiment to see how the collective intelligence of musicians can push the boundaries of AI-generated DSP.

The implications of this technology for the music industry are profound. We are entering an era where the "preset" is no longer a static file saved by a developer, but a living conversation between a human and an algorithm. For independent artists, this means the ability to create bespoke tools that provide a unique sonic signature, previously unattainable without hiring a developer or spending years learning to code. For educational institutions, Amorph serves as a powerful bridge, allowing students to see the immediate results of DSP concepts without getting bogged down in syntax errors.

Furthermore, Amorph addresses a common critique of AI in the arts: the fear that automation will lead to homogenization. Because Amorph relies on specific user prompts, the output is inherently tied to the user’s vocabulary, imagination, and intent. Two producers asking for a "dark delay" might receive entirely different algorithms based on how they choose to elaborate on that request. This places the emphasis back on the producer’s role as an architect of sound, rather than a mere consumer of pre-packaged software.

However, the transition to AI-driven development is not without its challenges. As an open beta, Amorph is a work in progress. The quality of the generated plugins is heavily dependent on the clarity of the prompts and the current capabilities of the LLMs being utilized. There is also the matter of computational efficiency; AI-generated code may not always be as optimized as code handwritten by a seasoned engineer. Yet, these are the types of hurdles that beta testing is designed to address. By putting the tool into the hands of thousands of producers, Artists in DSP can gather the data necessary to refine the translation process and improve the stability of the generated DSP.

As we look toward the future, the release of Amorph may be remembered as a turning point in music software. It signals a shift away from the "black box" model of plugin design toward an open, conversational approach. It empowers the user to be a creator of their own tools, blurring the lines between the musician and the instrument maker. In a world where digital tools can often feel rigid and prescriptive, Amorph offers a glimpse into a more fluid, intuitive, and personalized future for sound design.

For those eager to experiment with this new frontier, the open beta of Amorph is currently available for download via the Artists in DSP Gumroad page. The developers encourage users to share their creations and feedback, contributing to the ongoing evolution of the platform. As the project matures, it will undoubtedly spark further discussion regarding the ethics, aesthetics, and technicalities of AI in music, but for now, it stands as a testament to the creative "freedom" that occurs when complex technology is made simple. Whether you are looking to build a one-of-a-kind synthesizer or a specialized processing tool for a specific mix, Amorph provides the canvas and the code, requiring only a few words to bring a new sonic reality into existence.